Julien Pansiot

Starting Researcher @ Inria Grenoble

Human motion capture and medical imaging

News

HackAtech Inria Grenoble - 19-21 January

I'm currently co-organising Inria Grenoble's hackAtech, a cross between a hackathon and a startup weekend.

The event will focus on image technologies (VR, AR, MoCap, AI, ...), with the aim of launching or boosting innovative projects and perhaps initiating startups.

Open to all, please join us!

Summary

Research interests

My main research interest lies in human motion capture, with an inclination towards multi-modal sensing.

Human motion capture is an exciting research topic given the complex nature of the human body and the large number of high-impact applications in medicine, sports science, biology, and media production.

I have often favoured multi-modal approaches because they exploit the complementarity of different sensing modalities to achieve more robust results. This is a very natural approach: the human sense of balance, for example, is based on a combination of visual (eyes), inertial (vestibular system), and pressure/force (proprioception) cues.

Current position

I am currently a research engineer at Inria, on the Kinovis platform.

Recent publications

- J. Pansiot and E. Boyer. CBCT of a Moving Sample from X-rays and Multiple Videos. TMI 2019.

- J. Pansiot and E. Boyer. CT from Motion: Volumetric Capture of Moving Shapes with X-rays and Videos. BMVC 2017.

- J. Pansiot and E. Boyer. 3D Imaging from Video and Planar Radiography. MICCAI 2016.

Current funding

- I am involved in the ANR Equipex+ grant "Continuum" as PI for Inria.

- I am involved in an ANR Collaborative Research Project (PRC) grant "INORA" as PI for Inria.

Past funding

- I have been funded by an Inria Starting Research Position (6 positions/year nationally).

- I have received an ANR Collaborative Research Project with Industrials (PRCE) grant: "CaMoPi" Capture and Modelling of the Shod Foot in Motion. Scientific Coordinator & PI, collaboration with Mines St Etienne / CIS, CTC, Sporaltec. Budget: 541k€ (success rate: 12.5%).

- I was involved in the starting FUI24 "SPINE PDCA" project. PI for Inria, collaboration with Surgivisio, EOS Imaging, APHP Trousseau, CHU Grenoble. Budget: 2.1M€. More details to come soon.

- I was heavily involved in the PERSYVAL LabEx exploratory project "Carambole", aiming at tracking 4D deformation of growing plants. Co-author, collaboration with MSC/Paris 7. Budget: 9k€.

Selected Projects

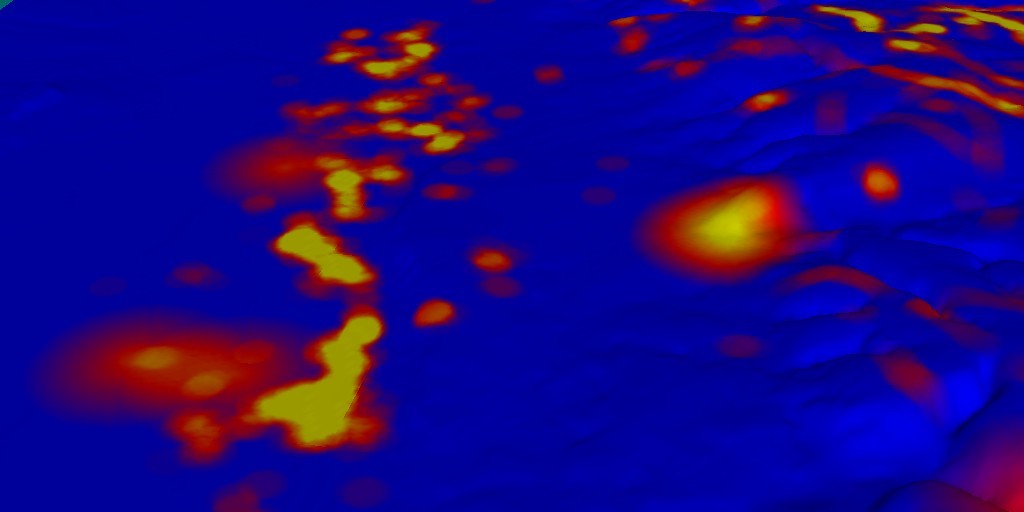

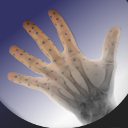

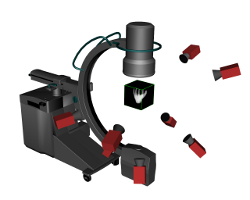

Combined Video and X-Ray 3D Imaging

Starting Researcher @ Inria Grenoble / Morpheo | 2013-current

Computed Tomography (CT) scanners provide accurate 3D X-ray attenuation models of living organisms, allowing for a range of studies and diagnostics. However, when compared to regular planar radiography, CT scanners are significantly more expensive, expose the patient to higher radiation doses, and require immobility.

To tackle these issues, we proposed combining a regular X-ray imaging device with a set of cameras [2] [5] [6] [8]. A software suite that estimates a dense 3D attenuation model of a moving sample, based on a short capture by a single X-ray imaging device combined with 10 video cameras, has been deployed on the novel KINOVIS platform recently installed at the Grenoble University Hospital (CHU).

Free-viewpoint Video Rendering

Technologist @ BBC R&D / Production Magic | 2011-2013

Dynamic scenes captured simultaneously from multiple cameras can be rendered with the traditional graphics pipeline, i.e. using a single baked texture mapped over a reconstructed mesh. However, significantly more anisotropic detail can be rendered by using all the originally captured video streams. For this purpose, the original images are fused at run-time based on the currently required viewpoint. In the context of the FP7 RE@CT project (capture, modelling, and 4D rendering) with the BBC, I developed a real-time, view-dependent rendering engine from multiple views to replace previous software. The module has been implemented as both a standalone and an OpenSceneGraph library plugin, and defines a file format for multi-view 4D sequences. It also made it possible to replace tracked proxy props (such as fake swords) with virtual models.
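As an illustration of run-time fusion based on the required viewpoint, here is a minimal Python sketch. It is not the RE@CT engine: the function names, the cosine-power weighting, and the `sharpness` exponent are all assumptions chosen for clarity. Each captured camera is weighted by how closely its direction matches the requested view direction, and the per-camera colour samples of a surface point are blended accordingly.

```python
import math

def normalize(v):
    """Return the 3D vector v scaled to unit length."""
    n = math.sqrt(sum(c * c for c in v))
    return tuple(c / n for c in v)

def blend_weights(view_dir, camera_dirs, sharpness=8.0):
    """Weight each captured camera by its alignment with the requested view.

    view_dir and camera_dirs are hypothetical 3D direction vectors;
    sharpness is an illustrative exponent, not a value from the original engine.
    """
    v = normalize(view_dir)
    raw = []
    for d in camera_dirs:
        cos_angle = sum(a * b for a, b in zip(v, normalize(d)))
        # Cameras facing away from the requested viewpoint contribute nothing.
        raw.append(max(cos_angle, 0.0) ** sharpness)
    total = sum(raw)
    if total == 0.0:
        return [0.0] * len(raw)
    return [w / total for w in raw]

def blend_colors(colors, weights):
    """Fuse per-camera RGB samples of one surface point into a single colour."""
    return tuple(sum(w * c[i] for c, w in zip(colors, weights)) for i in range(3))
```

With a weighting of this kind, a camera aligned with the requested viewpoint dominates the result, while cameras at similar angles share the contribution equally. A real engine would also have to handle visibility and occlusion, which this sketch ignores.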

I have also worked on WebGL-based applications [9], [13], as well as real-time free-viewpoint rendering from multiple views [12].

Real-time sports pitch camera pose estimation system

Technologist @ BBC R&D / Production Magic | 2012-2013

To enable live 2D and 3D effects on moving and zooming sports broadcast cameras, an automated, real-time pose and focal length estimation system was developed. For this purpose, the software used natural features such as pitch lines and the calibration core engine described below. The software comprises three modules: sports pitch line detection and labeling, a real-time camera pose estimation engine, and a GUI for parameter settings and feedback.

Flexible multiview calibration system

Technologist @ BBC R&D / Production Magic | 2011-2012

To avoid the use of large and cumbersome checker calibration charts for multiple camera calibration in large working volumes, a new system based on a lightweight LED wand was designed, developed, and deployed at the BBC. This system was part of a multiple-view capture, 3D reconstruction, and rendering pipeline. It comprises a 12-LED wireless calibration wand, real-time LED detection and labeling software, and the actual camera parameter estimation. Furthermore, a plugin for the capture module has been developed, which provides real-time feedback during acquisition. I have also worked specifically on the calibration of rolling shutter CMOS cameras [11].

Light-Weight Wheelchair Inertial Motion capture

Research associate @ Imperial College London / Visual Information Processing | 2010-2011

A light-weight sensor composed of an accelerometer and a gyroscope was mounted in the wheel of a wheelchair for real-time motion tracking, with wireless feedback to the sports coach. Based on a physical model of the wheelchair's intrinsic and global motion, the fusion of several sensor readings with a Kalman filter provides robust motion estimation with limited drift over time. The final solution enabled accurate displacement estimation at a limited cost, weight, and installation complexity [3]. The system was trialled by the British Paralympic Basketball team.
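To give a flavour of the Kalman filtering step, here is a deliberately simplified Python sketch. It is not the WISDOM model: it filters a single noisy wheel angular-rate signal with a scalar random-walk Kalman filter and then integrates the result into a distance. The function names, noise variances, wheel radius, and sampling period are all illustrative assumptions.

```python
def kalman_1d(measurements, process_var=1e-3, meas_var=0.25, x0=0.0, p0=1.0):
    """Scalar Kalman filter with a random-walk state model (illustrative values)."""
    x, p = x0, p0
    estimates = []
    for z in measurements:
        # Predict: the state is assumed constant, uncertainty grows by process noise.
        p = p + process_var
        # Update: blend prediction and measurement using the Kalman gain.
        k = p / (p + meas_var)
        x = x + k * (z - x)
        p = (1.0 - k) * p
        estimates.append(x)
    return estimates

def wheel_displacement(angular_rates, wheel_radius, dt):
    """Integrate wheel angular rate (rad/s) into travelled distance (m)."""
    return sum(w * wheel_radius * dt for w in angular_rates)

# Hypothetical usage: gyroscope readings around 2 rad/s, 0.3 m wheel, 100 Hz sampling.
readings = [2.1, 1.9, 2.05, 1.95, 2.0]
filtered = kalman_1d(readings, x0=readings[0])
distance = wheel_displacement(filtered, wheel_radius=0.3, dt=0.01)
```

A full system along the lines described above would use a multi-dimensional state and a physical motion model to fuse accelerometer and gyroscope readings; the scalar filter only shows the predict/update structure.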

I have also worked on a number of other sports such as swimming [16], rowing [19], running [20], climbing [22], and speed-skating.

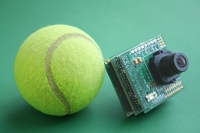

Autonomous Tennis Player Tracking

PhD candidate @ Imperial College London / Visual Information Processing | 2008-2009

While at Imperial College London, I participated in the development of an autonomous, real-time, wireless tennis player tracker using computer vision [17], in collaboration with the Lawn Tennis Association (LTA). The hardware was based on a custom-built ARM-like architecture encompassing a camera and a wireless link. I worked on the firmware for autonomous tennis player tracking. It consisted of the following modules: tennis court line detection, camera calibration, background segmentation, estimation of the player on court, and a micro web server for direct access.

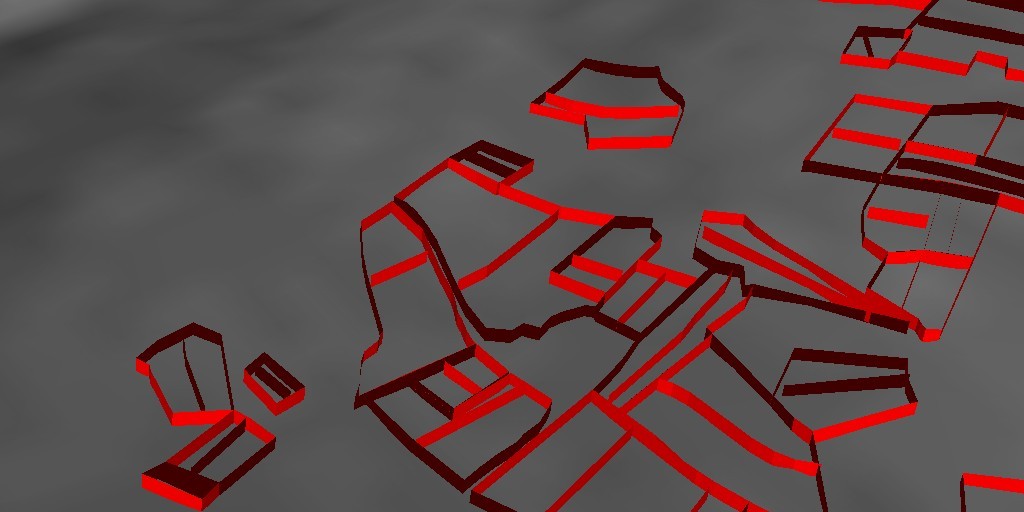

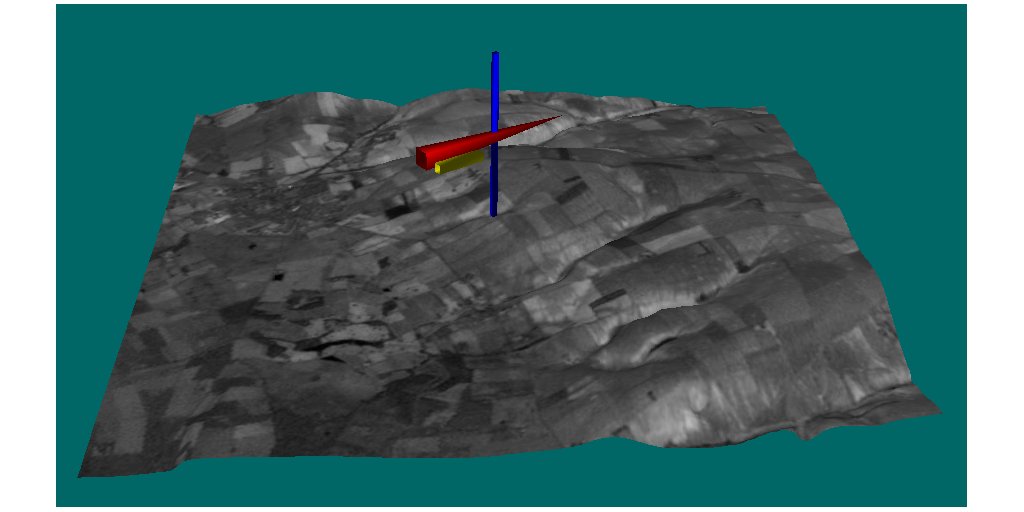

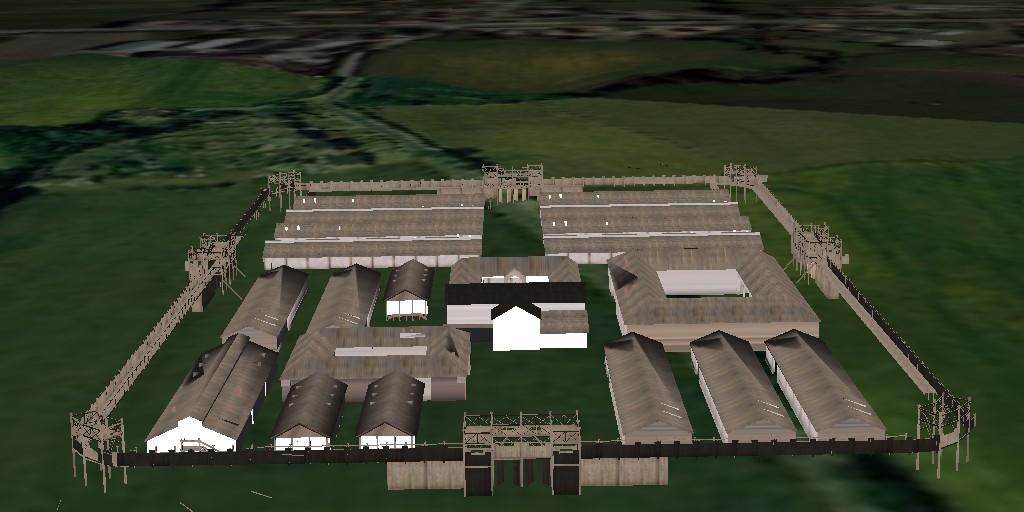

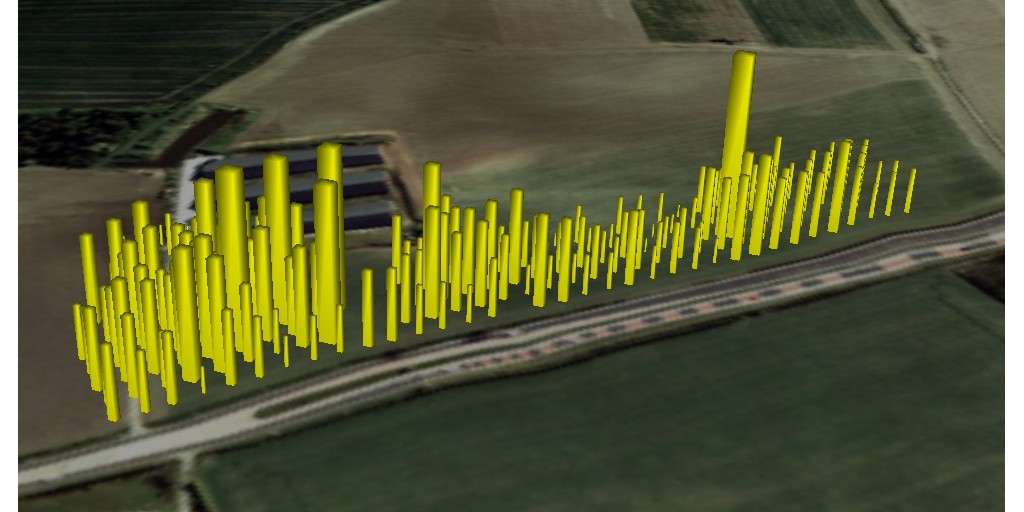

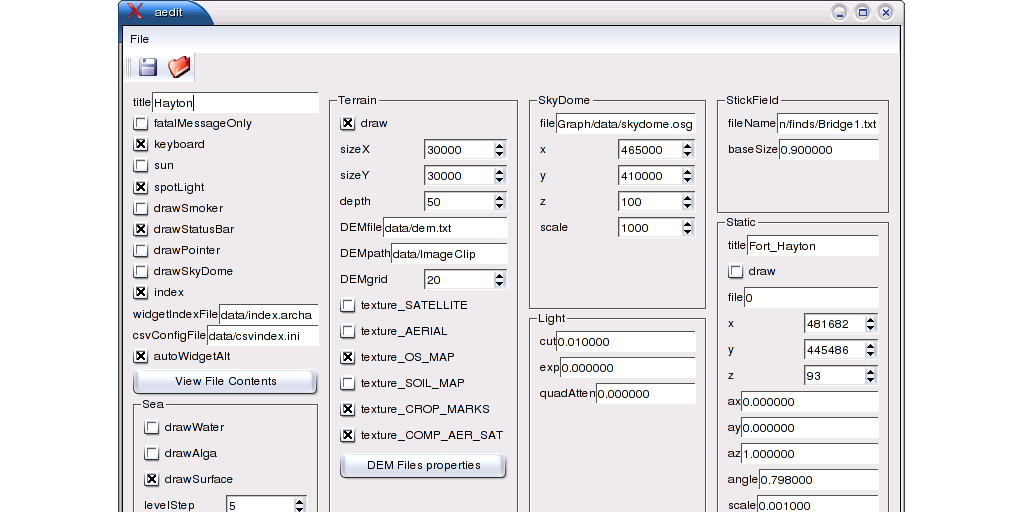

Immersive visualisation of archaeological data

MSc by Research @ The University of Hull / SimVis | 2003-2004

I worked on the immersive visualisation of, and interaction with, multidimensional archaeological data: the Digital Archaeological Virtual Environment (DAVE). The aim was to help archaeologists classify various kinds of data (text, 3D objects, sketches, images, video, ...) by rendering them onto a 3D terrain model with multiple overlays (aerial photography, maps, ...) [29].

Various options were included to visualise the scene from different perspectives (e.g. rendering an archaeological findings density map, extrapolating the crop marks as walls, changing the sea level, ...). The application rendered on a large back-projected wall screen (6x3m), with a wireless joystick and a tracked glove to enable interaction within the scene.

Publications

Journal publications

2023

[1] Matthieu Armando, Laurence Boissieux, Edmond Boyer, Jean-Sébastien Franco, Martin Humenberger, Christophe Legras, Vincent Leroy, Mathieu Marsot, Julien Pansiot, Sergi Pujades, Rim Rekik, Grégory Rogez, Anilkumar Swamy, and Stefanie Wuhrer. 4DHumanOutfit: A multi-subject 4D dataset of human motion sequences in varying outfits exhibiting large displacements. Computer Vision and Image Understanding. 237:pages 103836, 2023. [ bib | http ]

2019

[2] Julien Pansiot and Edmond Boyer. CBCT of a Moving Sample from X-rays and Multiple Videos. IEEE Transactions on Medical Imaging (TMI). 38(2):pages 383-393, February 2019. [ bib | http | pdf ]

2011

[3] Julien Pansiot, Zhiqiang Zhang, Benny Lo, and Guang-Zhong Yang. WISDOM: Wheelchair Inertial Sensors for Displacement and Orientation Monitoring. Measurement Science and Technology. 22(10):pages 105801, 2011. [ bib | http | pdf | video ]

2008

[4] Omer Aziz, Benny Lo, Julien Pansiot, Louis Atallah, Guang-Zhong Yang, and Ara Darzi. From computers to ubiquitous computing by 2010: health care. Philosophical Transactions of The Royal Society A. 366(1881):pages 3805-3811, 2008. [ bib | http | pdf ]

Conference publications

2017

[5] Julien Pansiot and Edmond Boyer. CT from Motion: Volumetric Capture of Moving Shapes with X-rays and Videos. In British Machine Vision Conference (BMVC), London, September 2017. [ bib | http | pdf | video ] > More details

2016

[6] Julien Pansiot and Edmond Boyer. 3D Imaging from Video and Planar Radiography. In International Conference on Medical Image Computing and Computer Assisted Intervention (MICCAI) - Part III, pages 450-457, Athens, October 2016. (oral presentation) [ bib | http | pdf | video ] > More details

2015

[7] Olivier A.J. Martin, Julien Pansiot, Lionel Reveret, Andreas Pusch, and David Pagnon. Video-based body shape capture for movement study - Application to a walking sequence. In Conference of the International Society for Posture and Gait Research (ISPGR), Seville, Spain, June 2015. [ bib | http ]

2014

[8] Julien Pansiot, Lionel Reveret, and Edmond Boyer. Combined Visible and X-Ray 3D Imaging. In Medical Image Understanding and Analysis (MIUA), pages 13-18, London, July 2014. [ bib | http | pdf ] > More details

2013

[9] Jeni Maleshkova, Matthew Purver, Oliver Grau, and Julien Pansiot. Presentation and Communication of Visual Artworks in an Interactive Virtual Environment. In SIGGRAPH Asia 2013 Posters, pages 37:1, Hong Kong, November 2013. [ bib | http | pdf ]

[10] Pauline Provini, Julien Pansiot, Lionel Reveret, and Olivier Martin. Video-based methodology for markerless human motion analysis. In 15ème Congrès international de l'Association des Chercheurs en Activités Physiques et Sportives (ACAPS), Grenoble, October 2013. [ bib | http | pdf ]

2012

[11] Oliver Grau and Julien Pansiot. Motion and velocity estimation of rolling shutter cameras. In ACM Proceedings of the 9th Conference on Visual Media Production (CVMP), pages 94-98, London, 2012. [ bib | http | pdf ]

[12] Marco Volino, Julien Pansiot, Oliver Grau, and Adrian Hilton. Layered View-dependent Texture Maps. In Proceedings of the 9th Conference on Visual Media Production (CVMP short papers), London, 2012. [ bib | pdf ]

[13] Jeni Maleshkova, Julien Pansiot, and Oliver Grau. Through the painter's eye: interactive 3D presentation of paintings on the web. In Proceedings of the 9th Conference on Visual Media Production (CVMP short papers), London, 2012. [ bib | pdf ]

2011

[14] Zhiqiang Zhang, Julien Pansiot, Benny Lo, and Guang-Zhong Yang. Human Back Movement Analysis Using BSN. In 8th International Conference on Body Sensor Networks (BSN), Dallas, Texas, 2011. [ bib | http | pdf ]

2010

[15] Douglas Gavin McIlwraith, Julien Pansiot, and Guang-Zhong Yang. Wearable and Ambient Sensor Fusion for the Characterisation of Human Motion. In IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), pages 5505-5510, Taiwan, October 2010. [ bib | http | pdf ]

[16] Julien Pansiot, Benny Lo, and Guang-Zhong Yang. Swimming Stroke Kinematic Analysis with BSN. In Proceedings of the IEEE International Conference on Body Sensor Networks (BSN), pages 153-158, Singapore, June 2010. [ bib | http | pdf ]

2009

[17] Julien Pansiot, Ahmed Elsaify, Benny Lo, and Guang-Zhong Yang. RACKET: Real-time Autonomous Computation of Kinematic Elements in Tennis. In IEEE 12th International Conference on Computer Vision Workshops (ICCV Workshops) - Fifth IEEE Workshop on Embedded Computer Vision, pages 773-779, Kyoto, Japan, 2009. (Best Paper Award) [ bib | http | pdf ]

[18] Douglas McIlwraith, Julien Pansiot, James Ballantyne, Salman Valibeik, Ahmed Elsaify, and Guang-Zhong Yang. Structure Learning for Activity Recognition in Robotic Assisted Intelligent Environments. In IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), pages 4644-4649, St. Louis, MO, 2009. [ bib | http | pdf ]

[19] Rachel King, Douglas McIlwraith, Benny Lo, Julien Pansiot, Alison McGregor, and Guang-Zhong Yang. Body Sensor Networks for Monitoring Rowing Technique. In IEEE proceedings of the 6th International Workshop on Wearable and Implantable Body Sensor Networks (BSN), pages 251-255, Berkeley, CA, 2009. [ bib | http | pdf ]

[20] Benny Lo, Julien Pansiot, and Guang-Zhong Yang. Bayesian Analysis of Sub-Plantar Ground Reaction Force with BSN. In IEEE proceedings of the 6th International Workshop on Wearable and Implantable Body Sensor Networks (BSN), pages 133-137, Berkeley, CA, 2009. [ bib | http | pdf ]

[21] Mohamed ElHelw, Julien Pansiot, Douglas McIlwraith, Raza Ali, Benny Lo, Louis Atallah, and Guang-Zhong Yang. An Integrated Multi-Sensing Framework for Pervasive Healthcare Monitoring. In 3rd International Conference on Pervasive Computing Technologies for Healthcare (PervasiveHealth), 2009. (Best Paper Award) [ bib | http | pdf ]

2008

[22] Julien Pansiot, Rachel King, Douglas McIlwraith, Benny Lo, and Guang-Zhong Yang. ClimBSN: Climber Performance Monitoring with BSN. In IEEE proceedings of the 5th International Workshop on Wearable and Implantable Body Sensor Networks 2008 (BSN), pages 33-36, Hong Kong, China, 2008. [ bib | http | pdf ]

[23] Douglas McIlwraith, Julien Pansiot, Surapa Thiemjarus, Benny Lo, and Guang-Zhong Yang. Probabilistic Decision Level Fusion for Real-Time Correlation of Ambient and Wearable Sensors. In IEEE proceedings of the 5th International Workshop on Wearable and Implantable Body Sensor Networks 2008 (BSN), pages 117-120, Hong Kong, China, 2008. [ bib | http | pdf ]

[24] Surapa Thiemjarus, Julien Pansiot, Douglas McIlwraith, Benny Lo, and Guang-Zhong Yang. An Integrated Inferencing Framework for Context Sensing. In IEEE proceedings of the 5th International Conference on Information Technology and Applications in Biomedicine 2008 (ITAB), Shenzhen, China, 2008. [ bib | http | pdf ]

2007

[25] Julien Pansiot, Danail Stoyanov, Douglas McIlwraith, Benny Lo, and Guang-Zhong Yang. Ambient and Wearable Sensor Fusion for Activity Recognition in Healthcare Monitoring Systems. In IFMBE proceedings of the 4th International Workshop on Wearable and Implantable Body Sensor Networks 2007 (BSN), pages 208-212, Aachen, Germany, 2007. [ bib | http | pdf ]

[26] Louis Atallah, Mohamed ElHelw, Julien Pansiot, Danail Stoyanov, Lei Wang, Benny Lo, and Guang-Zhong Yang. Behaviour Profiling with Ambient and Wearable Sensing. In IFMBE proceedings of the 4th International Workshop on Wearable and Implantable Body Sensor Networks 2007 (BSN), pages 133-138, Aachen, Germany, 2007. [ bib | http | pdf ]

2006

[27] Julien Pansiot, Danail Stoyanov, Benny Lo, and Guang-Zhong Yang. Towards Image-Based Modeling for Ambient Sensing. In IEEE proceedings of the 3rd International Workshop on Wearable and Implantable Body Sensor Networks 2006 (BSN), pages 195-198, Cambridge, MA, April 2006. [ bib | http | pdf ]

2005

[28] Julien Pansiot, Benny Lo, and Guang-Zhong Yang. A Simulator for Distributed Ambient Intelligence Sensing. In IEE proceedings of the 2nd International Workshop on Wearable and Implantable Body Sensor Networks (BSN), pages 119, London, April 2005. [ bib | pdf ]

2004

[29] Julien Pansiot, Paul Chapman, Warren Viant, and Peter Halkon. New Perspectives on Ancient Landscapes: A Case Study of the Foulness Valley. In Eurographics proceedings of the 5th International Symposium on Virtual Reality, Archaeology and Cultural Heritage, pages 251-260, Belgium, December 2004. [ bib | http | pdf ]

Patents

2016

[30] Julien Pansiot, Edmond Boyer, and Lionel Reveret. System and method for three-dimensional depth imaging. Patent App. WO2016005688, January 2016. [ bib | http ]

Master & PhD theses

2009

[31] Julien Pansiot. Markerless Visual Tracking and Motion Analysis for Sports Monitoring. PhD Thesis, Imperial College London, Department of Computing, October 2009. [ bib | pdf ]

2004

[32] Julien Pansiot. Immersive Visualization and Interaction of Multidimensional Archaeological Data. Master's Thesis, The University of Hull, Department of Computer Science, September 2004. [ bib | pdf ]

Extras

I am a keen marathon and ultramarathon runner (life-is-an-ultramarathon.org) and also enjoy hiking, rock climbing, paragliding, homebrewing, and photography.

I am particularly involved in the Hardmoors race series in North Yorkshire. Aside from running in it, I have volunteered as a marshal and race director, and I have been the hardmoors110.org.uk webmaster for several years.

Contact

Tel: +33 (0)4 76 61 55 90